Pollinator

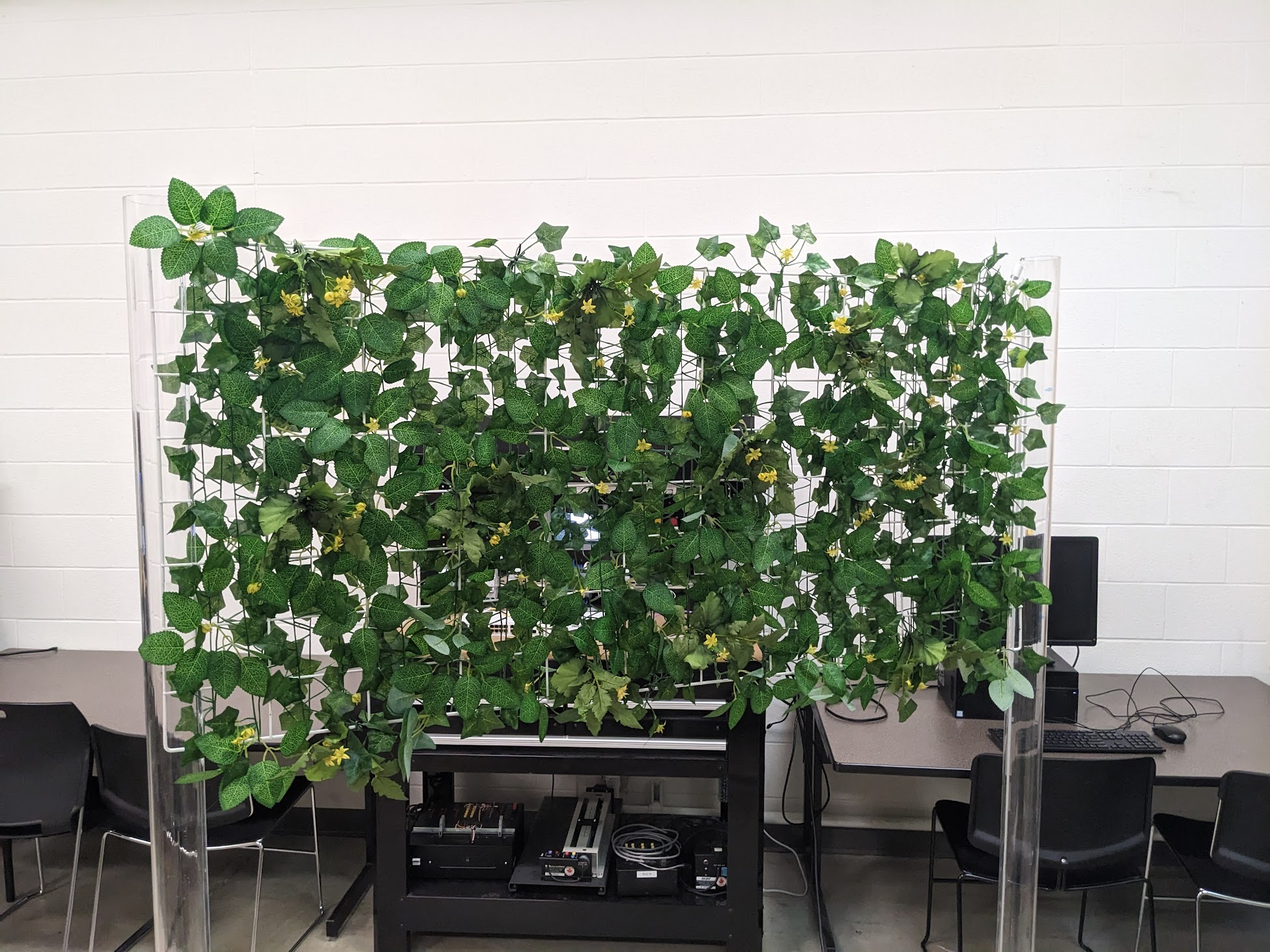

Make a robot arm pollinate flowers. Autonomously. That was the project I landed at the University of Windsor's AI Robotics Centre, and it turned into one of the most technically dense things I've worked on. The setup was a Universal Robots UR3e arm with an Intel RealSense D435i mounted on the end effector, all controlled from Python. A lot of the early work was just getting the basics to behave: reading the Universal Robots and Intel RealSense docs, figuring out the RTDE interface for the arm, and making the camera and robot stable enough to build on. I also designed and 3D-printed the custom pollination tool mount on the arm.

Once the hardware was dependable, the real problem became perception. I trained and compared YOLOv8 and Faster R-CNN on a few thousand labeled blossom images, then used YOLOv8 because it was the more practical choice for real-time inference on the edge device. But detection was only half the job. The harder part was the SE(3) hand-eye calibration that let me convert "the camera sees a flower here" into "the arm should move here." I ended up building a 3D coordinate pipeline with depth back-projection, rotation handling, and clustering using DBSCAN to stabilize detections.
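To make the "camera sees a flower here" to "arm should move here" step concrete, here is a minimal sketch of the geometry, not the project's actual code. It assumes a pinhole camera model and 4x4 homogeneous transforms. The intrinsics (`fx`, `fy`, `cx`, `cy`), the hand-eye result `T_ee_cam`, and the arm pose `T_base_ee` are hypothetical numbers chosen for illustration. In a real setup they would come from the camera's calibration, the hand-eye calibration, and the robot's reported tool pose.

```python
def backproject(u, v, depth_m, fx, fy, cx, cy):
    """Pinhole back-projection: pixel (u, v) plus depth in meters -> camera-frame XYZ."""
    x = (u - cx) * depth_m / fx
    y = (v - cy) * depth_m / fy
    return (x, y, depth_m)


def matmul4(A, B):
    """Multiply two 4x4 matrices stored as row-major nested lists."""
    return [[sum(A[i][k] * B[k][j] for k in range(4)) for j in range(4)]
            for i in range(4)]


def transform_point(T, p):
    """Apply a 4x4 homogeneous transform to a 3D point."""
    x, y, z = p
    return tuple(T[r][0] * x + T[r][1] * y + T[r][2] * z + T[r][3]
                 for r in range(3))


# Hypothetical detection: bounding-box center pixel and its depth reading.
p_cam = backproject(380, 240, 0.5, fx=600.0, fy=600.0, cx=320.0, cy=240.0)

# Hypothetical hand-eye result: camera pose expressed in the end-effector frame.
T_ee_cam = [[1, 0, 0, 0.00],
            [0, 1, 0, -0.05],
            [0, 0, 1, 0.03],
            [0, 0, 0, 1]]

# Hypothetical arm pose: end effector in the base frame (90 degrees about Z).
T_base_ee = [[0, -1, 0, 0.3],
             [1, 0, 0, 0.0],
             [0, 0, 1, 0.4],
             [0, 0, 0, 1]]

# Chain the transforms: base <- end effector <- camera.
T_base_cam = matmul4(T_base_ee, T_ee_cam)
p_base = transform_point(T_base_cam, p_cam)
print(p_base)  # roughly (0.35, 0.05, 0.93)
```

Because the camera rides on the end effector, `T_base_ee` changes with every move while `T_ee_cam` stays fixed, which is why the hand-eye calibration only has to be solved once. Clustering the resulting base-frame points would operate on the output of this conversion.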

Everything eventually had to run on a Jetson Orin Nano. The annoying deployment problem was moving from an x86 laptop development environment to an ARM edge device, which meant rebuilding and revalidating the parts of the stack that relied on native binaries, SDK bindings, and hardware-specific runtimes. I reworked the system into multithreaded capture and control loops so perception and robot motion could stay responsive on-device. That was what finally made the whole thing feel like a real robot system instead of a demo stitched together on my laptop.
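One common way to structure separate capture and control loops is a one-slot "latest frame" queue between two threads. The sketch below shows that pattern under assumptions and is not the system's actual code. `fake_read_frame` and `fake_handle_frame` are hypothetical stand-ins for the camera read and the detection-plus-motion step, and the sleep times are arbitrary.

```python
import itertools
import queue
import threading
import time


def capture_loop(read_frame, latest, stop):
    """Producer: keep only the newest frame so the consumer never sees stale data."""
    while not stop.is_set():
        frame = read_frame()
        try:
            latest.get_nowait()  # drop the unconsumed frame, if any
        except queue.Empty:
            pass
        latest.put(frame)


def control_loop(latest, handle_frame, stop):
    """Consumer: process whichever frame is newest when it becomes free."""
    while not stop.is_set():
        try:
            frame = latest.get(timeout=0.1)
        except queue.Empty:
            continue
        handle_frame(frame)


def run_demo(duration_s=0.2):
    counter = itertools.count()
    handled = []

    def fake_read_frame():  # hypothetical stand-in for a camera read
        time.sleep(0.001)
        return next(counter)

    def fake_handle_frame(frame):  # hypothetical stand-in for detect + move
        time.sleep(0.005)
        handled.append(frame)

    latest = queue.Queue(maxsize=1)
    stop = threading.Event()
    threads = [
        threading.Thread(target=capture_loop, args=(fake_read_frame, latest, stop)),
        threading.Thread(target=control_loop, args=(latest, fake_handle_frame, stop)),
    ]
    for t in threads:
        t.start()
    time.sleep(duration_s)
    stop.set()
    for t in threads:
        t.join()
    return handled


handled = run_demo()
print(f"processed {len(handled)} frames, skipping stale ones")
```

The one-slot queue is the design choice that matters here: when the consumer is slower than the camera, older frames are dropped instead of piling up, so motion decisions are made from recent data.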

I also spent a lot of time making the work reproducible. That meant building repeatable test setups, documenting how the arm and camera were wired into software, and writing guides for the next researchers on Python arm control, RealSense setup, and the vision pipeline. A lot of the challenge was not just making the robot work once. It was making it understandable enough that someone else could walk into the lab and keep going.